How to Boost Your Apps’ Performance with Asyncio: A Practical Guide for Python Developers

Most applications today rely heavily on input/output (I/O) operations. This type of operation includes downloading a web page’s content from the Internet, communicating with microservices over a network, or running multiple SQL queries against a database. As a result, the performance of our applications can be severely impacted by the time it takes to complete the various I/O operations. However, we can avoid this type of issue by using concurrency in our applications.

Asyncio was added in Python 3.4 as another way of managing highly concurrent workloads, alongside multithreading and multiprocessing.

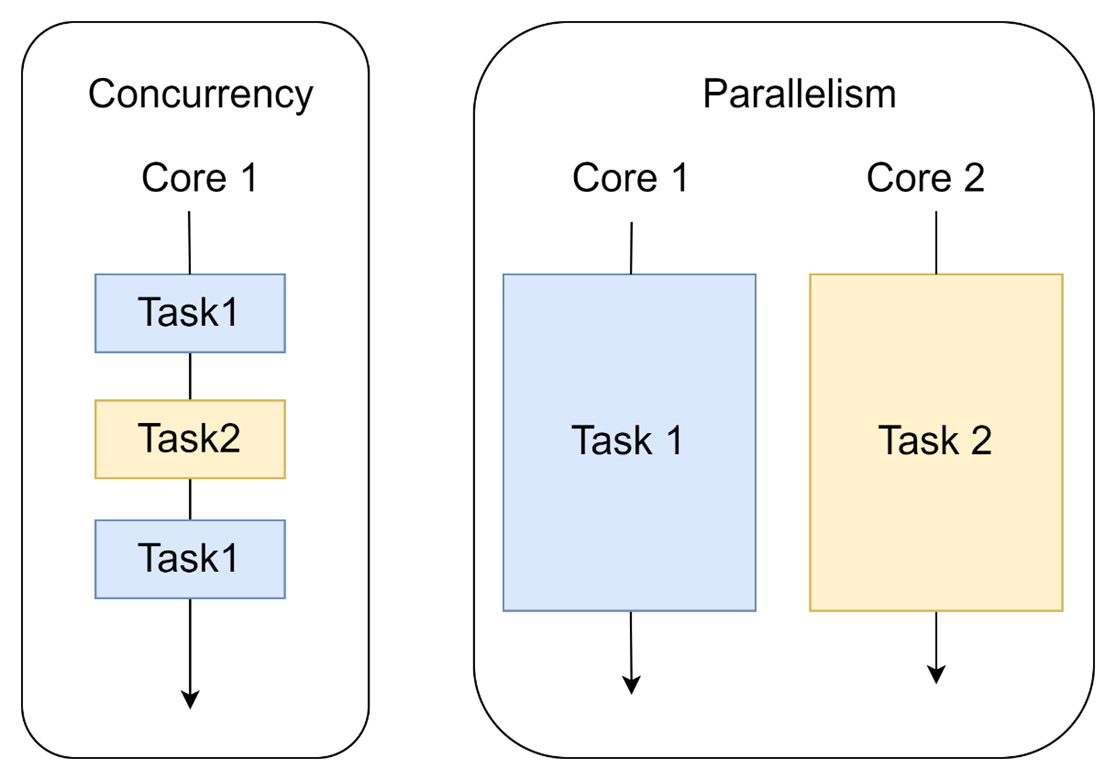

Parallelism vs. Concurrency

People often use the terms concurrency and parallelism interchangeably, but the two concepts are not the same. Parallelism is the ability to run several tasks at the same instant on different processors. It suits tasks that require a lot of computing power (CPU-bound tasks). Concurrency, on the other hand, is the ability to make progress on several tasks over the same period by interleaving them, possibly on a single processor. It suits I/O operations, where the time spent waiting for one operation can be used to run other tasks.

Illustration of how parallelism differs from concurrency

Asyncio Presentation

What Problem Is Asyncio Trying to Solve?

Asyncio solves asynchronous programming problems with I/O workloads. There are two reasons why asynchronous programming is better for concurrency than thread-based concurrency:

- Asyncio is a safer alternative to threaded applications because task switches happen only at explicit await points, which removes many of the race conditions and other non-deterministic bugs that threaded code is prone to.

- Asyncio makes it easy to handle thousands of socket connections simultaneously. It also supports long-running connections like WebSockets or MQ Telemetry Transport (MQTT) for Internet of Things (IoT) applications.

Before we move on, it’s important to understand some key concepts, such as the Global Interpreter Lock (GIL), race conditions, preemptive multitasking and cooperative multitasking.

The GIL makes the Python interpreter code thread-safe. It is a lock on the interpreter that ensures only a single Python thread is running at a time, even if more than one thread is active. You’re probably wondering why it even exists. Memory management in CPython is the answer. CPython relies mainly on reference counting: each object carries a count of the references pointing to it, and when that count drops to zero, the object’s memory is automatically freed. Without the GIL, two threads could update the same count at the same time and corrupt it.
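Reference counting can be observed from Python itself. The short sketch below assumes CPython and uses `sys.getrefcount`; the absolute numbers vary between versions, so only the change in the count is meaningful. The function name `refcount_demo` is made up for illustration.

```python
import sys


def refcount_demo():
    """Return the reference count of one object before, during and after aliasing."""
    obj = object()
    before = sys.getrefcount(obj)

    holder = [obj]                 # the list now holds one more reference to obj
    during = sys.getrefcount(obj)

    del holder                     # dropping the list releases that reference
    after = sys.getrefcount(obj)
    return before, during, after


if __name__ == "__main__":
    print(refcount_demo())
```

The count goes up while the list holds the object and comes back down once the list is deleted.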

Race conditions occur when two threads read and modify the same Python object at the same time, so the result depends on which thread gets there first. Code exposed to this is called non-thread-safe, and it can lead to erroneous and unexpected states.

Preemptive multitasking is the ability of a multitasking operating system to stop one task to perform another.

Cooperative multitasking is when the code itself, rather than the operating system (OS), determines when to switch from one task to another.
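Cooperative multitasking is easy to see in asyncio, where a task can only be switched out at an `await`. The following is a minimal sketch; the names `worker` and `main` and the two-step loop are illustrative assumptions.

```python
import asyncio


async def worker(name: str, log: list) -> None:
    for step in range(2):
        log.append(f"{name} step {step}")
        # The await below is the only place where this coroutine hands
        # control back to the event loop so another task can run.
        await asyncio.sleep(0)


async def main() -> list:
    log = []
    await asyncio.gather(worker("A", log), worker("B", log))
    return log


if __name__ == "__main__":
    print(asyncio.run(main()))
    # ['A step 0', 'B step 0', 'A step 1', 'B step 1']
```

The two workers alternate because each one yields voluntarily after every step. Without the `await`, worker A would finish both steps before B ever started.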

Asyncio Myths Busted

“Asyncio solves problems with the GIL.” This is not true because asyncio is not even affected by the GIL. Asyncio is single-threaded by definition, while the GIL mainly affects multithreaded programs.

“Asyncio prevents race conditions.” False. Race conditions are always a risk in concurrent programming. However, asyncio does eliminate many of the shared-memory access problems that often occur with threads, because a coroutine can only be interrupted at an await.
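A race condition can still appear in asyncio whenever a coroutine reads shared state, awaits, and then writes back. The sketch below is one assumed way to reproduce it and to fix it with `asyncio.Lock`; the shared `state` dictionary and the function names are hypothetical.

```python
import asyncio


async def unsafe_increment(state: dict) -> None:
    current = state["count"]       # read
    await asyncio.sleep(0)         # yield: other coroutines run here
    state["count"] = current + 1   # write back a value that may be stale


async def safe_increment(state: dict, lock: asyncio.Lock) -> None:
    async with lock:               # only one coroutine at a time past this point
        current = state["count"]
        await asyncio.sleep(0)
        state["count"] = current + 1


async def run(n: int, safe: bool) -> int:
    state = {"count": 0}
    lock = asyncio.Lock()
    if safe:
        jobs = [safe_increment(state, lock) for _ in range(n)]
    else:
        jobs = [unsafe_increment(state) for _ in range(n)]
    await asyncio.gather(*jobs)
    return state["count"]


if __name__ == "__main__":
    print("without lock:", asyncio.run(run(100, safe=False)))
    print("with lock:   ", asyncio.run(run(100, safe=True)))
```

Without the lock, updates are lost because every coroutine reads the counter before any of them writes it back. With the lock, all 100 increments are kept.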

“Concurrent programming is easier and faster with asyncio than with threads.” Not really. Threads are sometimes faster, but with asyncio, you have more control over the code and only have to manage a single thread. This also makes debugging easier if something goes wrong.

Asyncio’s Components

The asyncio documentation might look intimidating at first glance. All you need to know is that some application programming interfaces (APIs) are meant for framework design and others for everyday development. For everyday use, a small subset of APIs covers the following tasks:

- Starting an asyncio event loop

- Calling async/await functions

- Creating tasks to be executed in the event loop

- Waiting for all tasks to be completed

- Closing the event loop when all tasks have finished
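The five steps above can be sketched in a few lines. This is a minimal illustration, not a recommended structure for every program: `fetch` is a hypothetical stand-in for a real I/O call, and the delays are arbitrary.

```python
import asyncio


async def fetch(name: str, delay: float) -> str:
    # Hypothetical I/O call, simulated with a non-blocking sleep.
    await asyncio.sleep(delay)
    return f"{name} done"


async def main() -> list:
    # Create tasks: each one is scheduled on the running event loop.
    tasks = [asyncio.create_task(fetch(f"job-{i}", 0.01 * i)) for i in range(3)]
    # Wait for all tasks; results come back in the order the tasks were passed.
    return await asyncio.gather(*tasks)


if __name__ == "__main__":
    # asyncio.run() starts an event loop, runs main(), then closes the loop.
    print(asyncio.run(main()))
    # ['job-0 done', 'job-1 done', 'job-2 done']
```

With `asyncio.run()`, starting and closing the loop are handled in one call, so most application code never touches the loop object directly.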

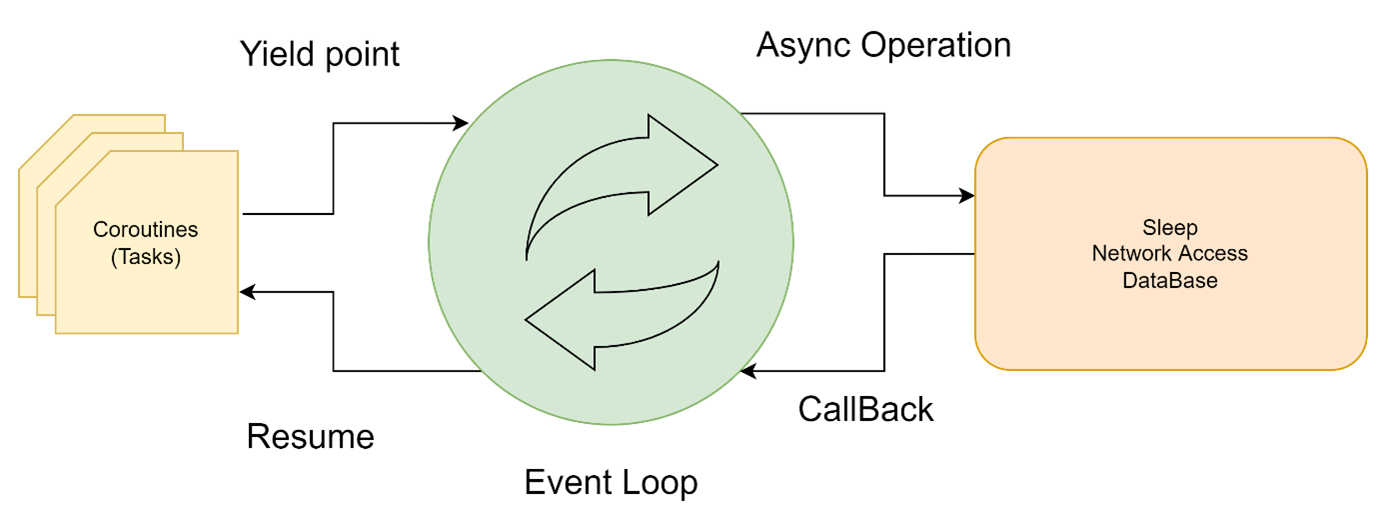

Event Loop

The event loop is the heart of the asyncio library in Python. It is responsible for managing and executing asynchronous tasks and callbacks. Essentially, this loop continually checks for tasks to execute. When there are no tasks to run, the event loop waits for new tasks to become available. This allows multiple tasks to be executed concurrently without one of them stopping the rest of the program from running.
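To make the loop's behaviour concrete, here is a minimal sketch that drives an event loop by hand with plain callbacks instead of coroutines. In everyday code `asyncio.run()` does this for you; the helper name `run_callbacks` and the delays are illustrative assumptions.

```python
import asyncio


def run_callbacks() -> list:
    order = []
    loop = asyncio.new_event_loop()
    try:
        # Callbacks scheduled with call_soon run in FIFO order on the next iteration.
        loop.call_soon(order.append, "first")
        # Timers run once their delay has elapsed; the loop waits in between.
        loop.call_later(0.01, order.append, "third (timer)")
        loop.call_soon(order.append, "second")
        # Stop the loop shortly after the timer above has fired.
        loop.call_later(0.02, loop.stop)
        loop.run_forever()
    finally:
        loop.close()
    return order


if __name__ == "__main__":
    print(run_callbacks())
    # ['first', 'second', 'third (timer)']
```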

Coroutines

A coroutine is simply a Python function that can pause its execution when it encounters a time-consuming operation. Once the operation is done, the paused coroutine is “woken up” and resumes where it left off. We use the async keyword to define a coroutine and the await keyword to pause it.
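As a small sketch of the two keywords: calling an `async def` function does not run its body; it only creates a coroutine object, which then has to be awaited or handed to the event loop. The names `slow_square` and `demo` are hypothetical.

```python
import asyncio


async def slow_square(x: int) -> int:
    # Pause here and let the event loop do other work in the meantime.
    await asyncio.sleep(0.01)
    return x * x


def demo():
    coro = slow_square(3)                 # nothing has executed yet
    is_coro = asyncio.iscoroutine(coro)   # it is only a coroutine object
    result = asyncio.run(coro)            # the event loop actually runs it
    return is_coro, result


if __name__ == "__main__":
    print(demo())  # (True, 9)
```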

Tasks and Futures Classes

The Future class is the superclass of Task and provides the methods for interacting with the event loop. To differentiate between these two classes, think of a Future as a placeholder for the result an activity will produce in the future. It is managed by the event loop (if you are familiar with JavaScript, it is the equivalent of a “promise”). A Task is a Future that wraps a coroutine and runs it to completion; it is typically created using the create_task() function.

The Awaitable class inheritance hierarchy

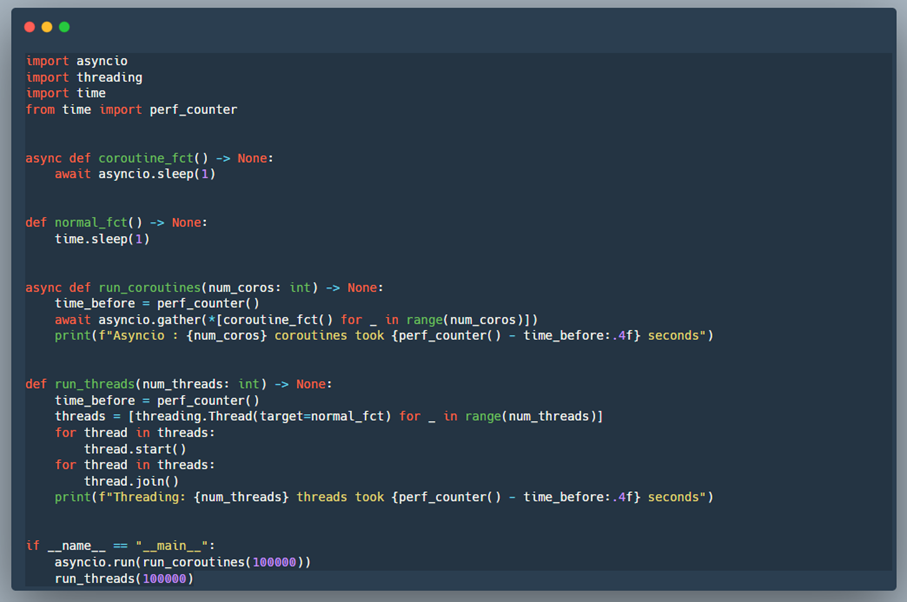
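The relationship between the two classes can be checked directly. The sketch below assumes a trivial coroutine named `compute` and fills a bare Future by hand, which application code rarely needs to do.

```python
import asyncio


async def compute() -> int:
    await asyncio.sleep(0.01)
    return 42


async def main() -> dict:
    loop = asyncio.get_running_loop()

    # A bare Future is a placeholder: something else must set its result.
    future = loop.create_future()
    loop.call_later(0.01, future.set_result, "filled in later")

    # A Task is a Future that wraps a coroutine and runs it on the loop.
    task = asyncio.create_task(compute())

    return {
        "task_is_future": isinstance(task, asyncio.Future),
        "future_result": await future,
        "task_result": await task,
    }


if __name__ == "__main__":
    print(asyncio.run(main()))
```

Both objects are awaited the same way, which is why most code can treat them interchangeably as awaitables.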

Practical Example

Discussion About Asyncio

As we saw in the example above, asyncio came out about ten times faster than threading. It is faster because switching between coroutines is cheaper than switching between threads.

When a coroutine calls ‘await asyncio.sleep(1)’, it gives control back to the event loop, which can then switch to another coroutine that is ready to be executed. This means multiple coroutines can make progress concurrently in a single thread without having to switch between threads.

On the other hand, when a thread calls ‘time.sleep(1)’, it blocks that entire thread until the sleep ends. Other threads can still run in the meantime, but only if they exist: handling many waits at once means creating and managing one thread per wait. This can involve huge costs in memory and context switching, on top of the GIL allowing only one thread to execute Python code at a time.
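A rough way to compare the two approaches is to run the same number of simulated waits with coroutines and with threads. The sketch below is an assumed benchmark, not the article's original example: `N`, `DELAY` and the helper names are made up, and the timings it prints depend on the machine.

```python
import asyncio
import threading
import time

N = 50        # number of concurrent waits (arbitrary)
DELAY = 0.05  # seconds each simulated I/O call takes (arbitrary)


async def async_worker() -> None:
    await asyncio.sleep(DELAY)    # yields to the event loop while waiting


async def run_async(n: int) -> None:
    await asyncio.gather(*(async_worker() for _ in range(n)))


def run_threads(n: int) -> None:
    # One OS thread per wait; time.sleep blocks only the calling thread.
    threads = [threading.Thread(target=time.sleep, args=(DELAY,)) for _ in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()


def timed(fn) -> float:
    start = time.perf_counter()
    fn()
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"asyncio:   {timed(lambda: asyncio.run(run_async(N))):.3f}s")
    print(f"threading: {timed(lambda: run_threads(N)):.3f}s")
```

Both versions finish in far less than `N * DELAY` seconds because the waits overlap. The gap between them tends to widen as `N` grows, since each extra thread costs more than each extra coroutine.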

Note that the difference in performance between asyncio and threading will depend on the specific use case. In some instances, threading may be faster or more appropriate than asyncio. However, if you want to run many I/O-bound tasks at once without blocking, asyncio may be a better choice than threading.

Comparison: Asyncio vs. Threading

| Asyncio | Threading |
| --- | --- |
| Event-driven (asynchronous) programming | Procedural programming and object-oriented programming (OOP) |
| Coroutine-based concurrency | Thread-based concurrency |
| Lighter than threads | Heavier than coroutines |
| No GIL | Limited by the GIL |
| Non-blocking I/O | Blocking I/O |
| >100,000 tasks | 100–1000 tasks |

How to Choose between Asyncio, Threading, and Multiprocessing?

I/O-bound problem: use asyncio if the libraries you are using support it. If not, use threading.

CPU-bound problem: use multiprocessing.
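When most of a program is already written with asyncio but one library only offers blocking calls, the two approaches can be combined. The sketch below assumes Python 3.9 or later for `asyncio.to_thread`; `blocking_io` is a hypothetical stand-in for such a library call.

```python
import asyncio
import time


def blocking_io(delay: float) -> str:
    # Hypothetical call into a library that has no asyncio support.
    time.sleep(delay)
    return f"slept {delay}"


async def main():
    start = time.perf_counter()
    # Each blocking call runs in a worker thread, so the event loop stays free.
    results = await asyncio.gather(
        *(asyncio.to_thread(blocking_io, 0.1) for _ in range(4))
    )
    elapsed = time.perf_counter() - start
    return results, elapsed


if __name__ == "__main__":
    results, elapsed = asyncio.run(main())
    print(results, f"{elapsed:.2f}s")
```

The four calls overlap, so the total time stays close to a single delay rather than the sum of all four.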

| | Operation | Parallel | Shared memory | Stop possible? |
| --- | --- | --- | --- | --- |
| Asyncio | I/O | No | Yes | Yes |
| Threading | I/O | No | Yes | No |
| Multiprocessing | CPU | Yes | No | Yes |
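The “Stop possible?” column presumably refers to cancelling work that is already in progress. Assuming that reading, here is a minimal sketch of how an asyncio task can be stopped from the outside; `long_job` and the `state` dictionary are hypothetical.

```python
import asyncio


async def long_job(state: dict) -> None:
    try:
        await asyncio.sleep(10)            # simulated long-running I/O
    except asyncio.CancelledError:
        state["cleaned_up"] = True         # chance to release resources
        raise                              # re-raise so the task ends as cancelled


async def main():
    state = {"cleaned_up": False}
    task = asyncio.create_task(long_job(state))
    await asyncio.sleep(0.01)              # let the job start
    task.cancel()                          # request cancellation at its next await
    try:
        await task
    except asyncio.CancelledError:
        pass
    return task.cancelled(), state["cleaned_up"]


if __name__ == "__main__":
    print(asyncio.run(main()))  # (True, True)
```

Python's threading module offers no comparable call for stopping a thread from the outside, which matches the “No” in the table.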
