Digital Factory: Which Technical Foundation?

Post co-written by Laurent Yin and Aly-Bocar Cissé (Cellenza) & Eric Grenon and Elliott Pierret (Microsoft)

A solid and scalable technical foundation is required to build digital factories that meet your organization’s strategic objectives.

Cloud Architectures for Time-To-Market

The main concern is time-to-market. If a Digital Factory cannot meet users’ expectations by creating digital products at a consistent rate, the initiative will fail. To ensure that the technology aligns with the identified business challenges, it’s critical to take your time and be methodical. In today’s IT environment, cloud-centric architectures are the natural choice: digital factories require instant resource allocation, automated deployment functions, and resource scalability. In addition, rather than treating the cloud as a security risk, as was common when it first emerged, the current trend is to use platform convergence as an opportunity to impose security rules over the medium and long term.

Properly organizing cloud resources is an excellent place to start for the technical foundation. This is referred to as the cloud landing zone. This allows us to have a plan that serves as the foundation for the cloud resources we deploy. This is especially important for digital factories. The network, security, consistency, and governance are critical in defining this landing zone, as they are in any urbanization plan with a long-term vision. This is the basis for container or serverless architecture deployment.

A Scalable Network Base for the Technical Foundation

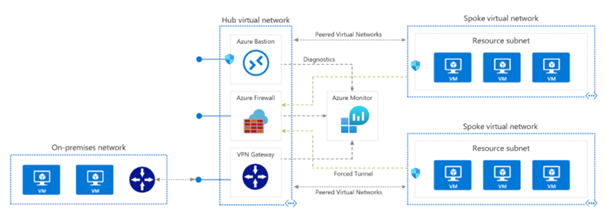

The hub-and-spoke model is becoming increasingly important for the technical organization of the cloud. Three aspects of this style of architecture stand out:

- Connectivity

- Security

- Scalability

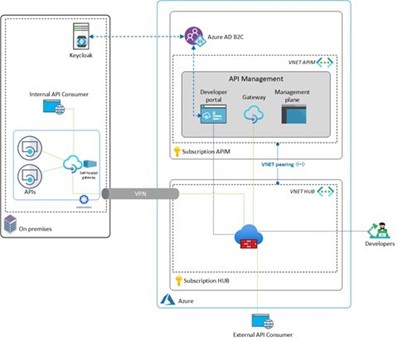

The interactions between the Digital Factory and the information system are the focus of this topology. This eliminates the possibility of a “shadow” platform that is separate from the information system and has no governance. The hub is the central component, ensuring connectivity between the Cloud and the on-premises deployed resources, among other things. The digital factory applications will need to connect to the hub rather than the on-premises network. These are called spokes. This way, connectivity is shared, saving time and money. Management is also more transparent, and the various spokes are separated so that they do not affect each other.

This configuration improves security by allowing controls to be enforced at the hub, which is an essential component of this “star” structure. As a result, you can install a firewall or other shareable components such as DNS servers or bastions that act as relays when connecting to a resource deployed in the hub-and-spoke model. Supervision or security systems like Security Information and Event Management (SIEM) can intervene at this level, allowing for global and central control.

There are advantages in terms of scalability, in that a spoke can be added without affecting the others. The resources deployed in this spoke are then part of a defined perimeter that does not affect the resources of other projects, for example. This ensures the digital factory’s scalability, which reflects the ambitions of this type of platform.

On Microsoft’s cloud, this architecture is delivered using virtual networks linked together via VNET peering. It’s critical to anticipate future IP ranges during the design phase, at least in the medium term. This configuration is possible even if the virtual networks are declared in different subscriptions, creating autonomy between the hub and the spokes. By combining network, security, and routing configurations into a single interface, Azure Virtual WAN simplifies this large-scale configuration.
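Anticipating IP ranges can be rehearsed before anything is deployed. The sketch below is a minimal illustration using only Python's standard `ipaddress` module: it carves one block per virtual network out of a supernet and reports overlaps, since peered virtual networks cannot share address space. The supernet `10.20.0.0/16`, the `/20` block size, and the network names are assumptions chosen for the example, not recommendations.

```python
import ipaddress


def plan_address_spaces(supernet, new_prefix, names):
    """Assign one non-overlapping block of the supernet to each named network."""
    blocks = ipaddress.ip_network(supernet).subnets(new_prefix=new_prefix)
    plan = {}
    for name in names:
        try:
            plan[name] = next(blocks)
        except StopIteration:
            # The supernet is exhausted: the plan was sized too small.
            raise ValueError(f"no room left in {supernet} for {name!r}") from None
    return plan


def find_overlaps(plan):
    """Return every pair of networks whose address ranges overlap."""
    names = sorted(plan)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if plan[a].overlaps(plan[b])
    ]


# Hypothetical hub and two spokes carved out of an assumed supernet.
plan = plan_address_spaces("10.20.0.0/16", 20, ["hub", "spoke-api", "spoke-app1"])
for name, network in plan.items():
    print(f"{name}: {network}")
print("overlaps:", find_overlaps(plan))
```

Running the same overlap check against the on-premises ranges before adding a spoke is a cheap way to catch conflicts that would otherwise surface only once peering or routing is configured.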

Azure ExpressRoute is the best choice for connectivity between the cloud and your on-premises instances, to give you a private network link. The hub’s virtual network is then connected to the on-premises network. Note that a VPN can also be used, but a private link is preferable. Each spoke connected to the hub is then connected to the internal resources. User Defined Routes in Azure can help by allowing network traffic to be routed to the correct destinations.
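To make the role of routes more concrete, here is a small sketch of longest-prefix-match selection, which is the general principle Azure documents for choosing among routes that cover the same destination. It is a simplified model, not the platform's implementation: the `Route` class, the next-hop labels, and the address ranges are all hypothetical, and tie-breaking between route sources is ignored.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    prefix: str    # destination range in CIDR notation
    next_hop: str  # illustrative label, not a real Azure next-hop type


def select_next_hop(routes, destination):
    """Return the next hop of the most specific route covering the destination."""
    dest = ipaddress.ip_address(destination)
    matches = [r for r in routes if dest in ipaddress.ip_network(r.prefix)]
    if not matches:
        return None
    best = max(matches, key=lambda r: ipaddress.ip_network(r.prefix).prefixlen)
    return best.next_hop


# Assumed route table for one spoke: default traffic goes through the hub
# firewall, on-premises ranges go through the gateway in the hub.
SPOKE_ROUTES = [
    Route("0.0.0.0/0", "hub-firewall"),
    Route("192.168.0.0/16", "on-premises-gateway"),
    Route("10.20.16.0/20", "local-vnet"),
]

print(select_next_hop(SPOKE_ROUTES, "192.168.4.10"))
print(select_next_hop(SPOKE_ROUTES, "8.8.8.8"))
```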

Configuring network security groups provides control over incoming and outgoing network flows. Security on VNETs can be strengthened with an Azure Firewall component installed at the hub level. This centralizes network operations that contribute to the hub-and-spoke architecture security. Azure Monitor can be configured as a shared component at the hub level to collect platform logs. This enables automated alerting.
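Network security group rules are evaluated in priority order, with lower numbers processed first, and evaluation stops at the first matching rule. The sketch below models that behavior for inbound traffic only. It is deliberately simplified (one source range and one port per rule, no protocols or service tags), and the rule set and addresses are assumptions made up for illustration.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int         # lower number = evaluated first
    source: str           # source range in CIDR notation
    port: Optional[int]   # None means any destination port
    allow: bool


def is_allowed(rules, source_ip, port):
    """Apply the first matching rule in priority order; deny if none matches."""
    src = ipaddress.ip_address(source_ip)
    for rule in sorted(rules, key=lambda r: r.priority):
        if src in ipaddress.ip_network(rule.source) and rule.port in (None, port):
            return rule.allow
    return False


# Hypothetical inbound rules for a spoke subnet.
RULES = [
    Rule(100, "10.20.0.0/20", 443, True),     # HTTPS from the hub range
    Rule(200, "10.20.0.0/16", None, False),   # everything else from the platform
    Rule(65500, "0.0.0.0/0", None, False),    # catch-all deny
]

print(is_allowed(RULES, "10.20.0.5", 443))
print(is_allowed(RULES, "10.20.0.5", 22))
```

Keeping a model like this next to the real rule definitions makes it easy to write regression tests for the flows a spoke is expected to accept or reject.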

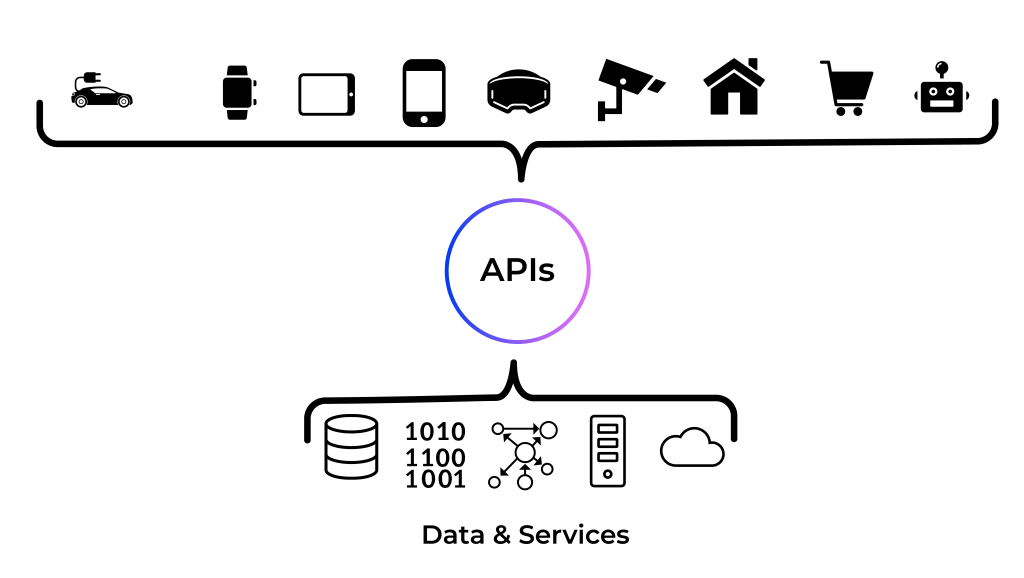

Integrate the Application Dimension

Aside from organizing the relevant network components for digital factory scalability, it’s important to consider implementing digital solutions at scale. This usually equates to an API-based approach that allows various clients (PCs, mobiles, connected objects, apps, etc.) to retrieve data and trigger operations through a unified framework.

Information systems rely on a key component to manage these APIs efficiently: API management. With API management, a middleware providing these features, APIs can be exposed securely and governed without prior development. You can also streamline the management of your consumer applications and track API usage to improve their promotion. In some cases, API management is also used to monetize APIs.

Because this component is global, it’s typically positioned as a shared service within the information system. It has a place in the digital factory due to its value. In Azure, for example, the Azure API Management service can be assigned a dedicated spoke that all the resources deployed in the hub-and-spoke architecture can rely on. The API Management instance can be deployed in a virtual network and have the same connectivity to the on-premises resources.

Spokes are frequently instantiated with Platform as a Service (PaaS) resources to supplement the standard application architecture and focus on time-to-market and value generation. This saves time and effort on technical infrastructure maintenance. Cloud providers also value the PaaS model. Azure App Service is used in most Azure architectures to deploy web applications and compute resources. Azure SQL Database can store relational data, Azure Cosmos DB can store NoSQL data, and Azure Storage can store unstructured data. Azure Application Gateway can be configured as a web application firewall for increased application security. Azure Key Vault is a tool for managing secrets. Finally, Application Insights and Log Analytics are recommended for good operability with monitoring.

The goal of digital factories is also to innovate, with the ultimate aim of industrializing services and deploying them in production. Recent technological trends have spawned topics surrounding data processing and flows, serverless computing, and even containers. The cloud must be used to foster these opportunities, whether through containers (Container as a Service, CaaS), functions (Function as a Service, FaaS), or cloud-based integration flows (Integration Platform as a Service, iPaaS). We can build impactful digital factories that control maintenance management by leveraging these new possibilities and deploying them on a large scale. To accomplish this, Azure provides natively managed cloud services such as Azure Kubernetes Service, Azure Container Registry, Azure Functions, Azure Service Bus, Azure Data Factory, and Azure Databricks, which give us tools for these approaches and reinforce the technical foundation. Deepening the industrialization of Internet of Things (IoT) platforms is also important.

All this means that the cloud must provide the flexibility and services required to best facilitate digital initiatives within the company while also responding to an ever-increasing range of requirements. Creating dashboards to track services and usage helps coordinate these initiatives. This also helps to fuel the dynamism that digital factories require.

Integrated Security Tools

The digital factory is a strategic component of the company, so security and compliance must be prioritized. The attack surface is growing due to the openness and technological diversity of the information system. Continuous evaluation methods are essential to ensure the long-term viability of digital factory projects. Penetration testing by reputable audit bodies is always required, but it only reflects a specific point in time and a particular configuration. The security and compliance of the information system must now be tested more frequently, with security recommendations and alerts issued in near real-time.

To address these concerns, a global solution is required to detect alerts, provide greater visibility into the system’s current state, and respond proactively to reported vulnerabilities. This solution must cover the entire digital factory so that IS security is not compromised when a new resource is added. This is especially true when integrating new technology into the digital factory. Therefore, it’s critical to thoroughly research each resource type before using it as a reference component. Scalability is inherent in the cloud, so it should also be part of a digital factory’s security features. Data must likewise be collected at scale to help prevent security breaches.

Ignoring detected alerts is not an option. Recommendations for solving the issue must accompany each sensitive event that is triggered. Automation to apply security recommendations is an avenue that needs to be exploited: we embrace DevSecOps practices, where security must evolve to work in tandem with agile development, deployment, and maintenance processes. Responsiveness is even more important because you must be able to respond quickly, for example, when news of an IT vulnerability breaks. Rather than disrupting the initial project milestones when a critical vulnerability emerges, the remedial actions can be readily absorbed if they are already built into the digital factory’s security foundations.

This means that you need to incorporate all security elements, monitoring, supervisory agents, log collection, and threat analysis into the design of your digital factory from the start. The benefit of the cloud is that you can deploy these services easily and benefit from seamless integration.

For example, when deploying virtual machines, you want to be able to verify that they are compliant with best practices instantly. You should also collect event logs and set up antivirus and a firewall, etc. Similarly, if you use containers, you will want to scan the images with something like a vulnerability scanner.

You also need an event collection and centralization system to analyze the signals from all your digital factory services. Ideally, corrective measures should be taken after signal correlation.

You can use two Azure services for this: Microsoft Defender for Cloud and Microsoft Sentinel. Let’s take a closer look at these two services that can be used to secure all aspects of your digital factory.

Microsoft Defender for Cloud

Microsoft Defender for Cloud gives you the tools to harden your resources, monitor your security posture, defend against cyberattacks, and streamline security management. Because Defender for Cloud is natively integrated into Microsoft Azure, it’s easy to deploy and provides simple automatic provisioning to secure your resources by default.

This service meets three security requirements:

- Continuous assessment: to help you understand your current security posture, it shows a secure score at any time, based on an analysis of your Azure environments.

- Security recommendations: the tool generates a list of customized and prioritized hardening tasks to help you improve your security posture. You implement these by following the detailed fix steps. Defender for Cloud provides a “Fix” button for many recommendations, allowing for automated implementation!

- Security alerts: when you enable enhanced security features, Defender for Cloud detects threats to your resources and workloads. These alerts appear in the Azure portal, and Defender for Cloud can send them via email. Alerts can also be delivered to SIEM and Security Orchestration, Automation, and Response (SOAR) solutions.

Microsoft Sentinel, the SIEM and SOAR Solution

Microsoft Sentinel provides enterprise-wide threat intelligence and intelligent security analysis. It combines alert detection, threat visibility, proactive hunting, and threat response into a single solution. Artificial intelligence is used to identify suspicious activity and reduce false positives. We know how irrelevant alerts can sabotage digital factory monitoring. So it’s vital to avoid them from the start, beginning with the technical foundation.

Digital Factory Services Catalog

Your digital factory must provide services. These services are for digital factory users. However you build these services, making them available to your users is critical. Your service is available if your users:

- Can find your services even if not notified of their existence

- Have access to your services

- Have access to quality documentation

- Have access to the terms of use for your services

The four points above are essential if we are to consider a “Service Catalog.” The primary “users” here are the developers in your Digital Factory. However, certain services may interest groups other than developers, so we’ll continue to use the term “users.”
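One way to make these four criteria actionable is to record them on each catalog entry and check them before a service is announced. The sketch below is only an illustration: the `CatalogEntry` class, its field names, and the example URLs are hypothetical and do not correspond to any particular catalog product.

```python
from dataclasses import dataclass


@dataclass
class CatalogEntry:
    name: str
    listed_in_catalog: bool = False  # users can find it without being told
    access_request_url: str = ""     # how users obtain access
    documentation_url: str = ""
    terms_of_use_url: str = ""

    def missing_criteria(self):
        """Return the availability criteria this entry does not yet satisfy."""
        checks = {
            "discoverable": self.listed_in_catalog,
            "accessible": bool(self.access_request_url),
            "documented": bool(self.documentation_url),
            "terms of use": bool(self.terms_of_use_url),
        }
        return [label for label, ok in checks.items() if not ok]

    def is_available(self):
        return not self.missing_criteria()


# Hypothetical service that is listed and documented, but not yet usable.
entry = CatalogEntry(
    name="managed-postgres",
    listed_in_catalog=True,
    documentation_url="https://example.com/docs/managed-postgres",
)
print(entry.is_available(), entry.missing_criteria())
```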

Like any other major initiative within a digital factory, the service catalog must be able to manage service integration in an automated manner while also allowing users to deploy these services within their projects.

In reality, achieving this level of maturity takes time due to the following technical issues:

- A consistent authentication policy within your digital factory. Being able to identify all users and resources is critical for security and governance. Any automation strategy is hindered by the inability to identify who subscribes to your service.

- A consistent authorization policy within your digital factory. The security aspect is also obvious here. Managing your users’ permissions is logical, but managing authorizations for your services is less so. To achieve your objectives, your services will undoubtedly rely on other services.

- The integration of your services with your users’ cloud architectures. As previously stated, these architectures are required for security and time to market. Services are divided into two main categories: those that extend the capabilities of your architectures and those that specialize those architectures. In both cases, designing services that are compatible with these architectures is critical if you want them to be used in accordance with your users’ expectations.

- The deployment of your services within projects. It’s common practice to separate designing services that fit into your users’ architectures from being able to deploy them automatically. The term “deploy” is used here in its broadest sense. Some services, for example, will require the opening of network flows, while others will require the implementation of IaaS or PaaS resources. Both cases involve deployment.

- Implementing monitoring tools for your services. In the long term, giving users the ability to understand what happens when they use your services will ease the work of the teams responsible for their support. This also applies to deployment processes. Receiving accurate information about why something fails is always more productive than the infamous “it doesn’t work.”

- The billing aspects associated with your services. Denying users the ability to monitor the cost of a service’s consumption is not recommended.

The above points may have prompted you to consider what cloud portals like Azure can provide. This is no coincidence, considering that the Cloud is an essential component of the digital factory as defined thus far. So, don’t be afraid to take advantage of the platform’s features, such as the Azure Marketplace or the Private Marketplace, to meet your needs. Do not hesitate to use ticketing or IT process management tools to manage service deployment or support processes.

These technical aspects are important but not the only ones to consider. We could also discuss versioning, environment management, and API access to this catalog. In addition, we have addressed the key points from the users’ perspective. The needs of the service providers are important as well. They are, however, heavily reliant on technological and organizational constraints that go beyond the scope of this post. The important thing to remember is that focusing your teams’ efforts on providing a consistent and robust user experience allows you to better frame the needs of your teams that provide/own these services.

Establishing a service catalog is a time-consuming and iterative process. Measure your efforts and only automate what you can control.

Building within the Digital Factory

Aside from human resources and funding, several elements are required to produce initiatives that can be delivered in production. By this, we mean aspects that enable teams to work efficiently while adhering to our industry’s current standards.

In an ideal world, each team would be able to select the tools that best fit their needs. However, you have to be realistic if you don’t want license and maintenance costs to skyrocket. Whatever you choose, make sure you don’t overlook these factors.

Managing Your Initiative

IT initiative tracking is not new. Numerous tools are available to meet this need: Azure DevOps, JIRA, Basecamp, etc.

Whichever you choose, keep in mind the following:

- Management of iterative development aspects. Iterative methods are now the industry standard for project management, and they are designed with agility in mind. When selecting a tool, the first requirement should be to ensure that you are familiar with all aspects of the method. Three key questions can help you decide:

  - How familiar are your teams with this tool?

  - What level of integration do you want with your teams’ other tools?

  - What KPIs do you think are necessary for project management and detecting development problems?

- Support for a task’s development stages and its level of detail. The development team must be able to describe the details of the tasks at hand and, perhaps most importantly, represent the stages of the construction process in the tool.

- Supporting team communication. Team communication is vital. Chat solutions such as Microsoft Teams or Slack are popular choices for direct communication and for short asynchronous exchanges (responses within a day). However, we recommend centralizing in this tool the decisions that result from these discussions, along with the longer asynchronous exchanges that can serve as context for the initiative.

- Support for documentation needs. All documentation elements must be centralized within the tool.

- Project monitoring reports. Producing all of the KPIs that measure the performance of your teams is a real challenge if you fail to consider these factors when selecting your tool. In the best-case scenario, your chosen tool can produce what you need without adding to your workload. If your need is too specific for the market, look into third-party solutions compatible with your product before investing in custom development.
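As an example of a KPI that depends on the tool recording task stages, the sketch below computes cycle time (the delay between a task entering an "in progress" stage and reaching a "done" stage) from a list of stage transitions. The `Transition` record and the stage names are assumptions for illustration; most tracking tools expose comparable history through their own exports or APIs.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class Transition:
    task_id: str
    stage: str
    entered_at: datetime


def cycle_times_days(transitions, start_stage="In Progress", end_stage="Done"):
    """Return days between first start and last completion, per finished task."""
    started, finished = {}, {}
    for t in sorted(transitions, key=lambda t: t.entered_at):
        if t.stage == start_stage:
            started.setdefault(t.task_id, t.entered_at)  # keep the first start
        elif t.stage == end_stage:
            finished[t.task_id] = t.entered_at           # keep the last completion
    return {
        task: (finished[task] - started[task]).total_seconds() / 86400
        for task in finished
        if task in started
    }


history = [
    Transition("T-1", "In Progress", datetime(2024, 1, 3, 9, 0)),
    Transition("T-1", "Done", datetime(2024, 1, 5, 9, 0)),
    Transition("T-2", "In Progress", datetime(2024, 1, 4, 9, 0)),  # still open
]
times = cycle_times_days(history)
print(times, "average:", mean(times.values()))
```

If the tool cannot export this kind of stage history, the KPI cannot be reconstructed afterwards, which is why reporting needs are worth checking at selection time.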

Source Control and Developer Collaboration Platform

Again, this is nothing new, and many solutions are available: Git, Mercurial, Bazaar, SVN, etc.

All of these solutions have their merits. Even though today’s industry favors distributed source control, with Git leading the way, arguments can be made for each solution, and that is before considering more niche solutions (such as Pijul) or commercial ones.

Beyond these “low-level” solutions, the solution that will allow team members to communicate and collaborate around large code bases will be the deciding factor. Several options exist:

It’s common practice within your Digital Factory to make the source code of your projects available within the organization. These tools integrate with your organization’s authentication systems and provide advanced source code rights management.

These tools are more than just source control tools. They are true collaboration platforms centered around the source code. As a result, they are frequently used to create source code artifacts and deliver them in the associated technical environment.

Managing Digital Factory Artifacts

We have already mentioned collaborative platforms for artifact creation. However, these artifacts have their own life cycle. Therefore, investing early in a solution that properly manages these artifacts is recommended. This is often referred to as an artifact warehouse. Every artifact that is created should ideally be delivered to this artifact warehouse.

Two solutions are market leaders here, owing to their multi-technology aspects:

Note: the solutions mentioned above can also be used for the same purposes, with the only difference being their level of specialization:

Having a centralized repository for artifacts within your organization reduces licensing and maintenance costs. As with any global centralized solution, ensuring high availability and consistent security requires significant effort. However, the benefits of centralizing your artifacts (easily accessible usage metrics, automated processes for your artifacts, etc.) outweigh the costs and security concerns.

Learn more about Digital Factories

Are you interested in Digital Factories? Want to know more about this topic? Read our latest posts:

- Why and how to create a Digital Factory?

- What is the Best Strategy and Approach for Your Digital Factory?

- How Do I Organize Teams in a Digital Factory?

- Financing and Budgeting for a Digital Factory

- Digital Factory: Feedback From Saint-Gobain – Aari

- Digital Factory: Nexan’s Experience