Azure’s Responsible AI Tools: The Responsible AI Dashboard

Post co-written by Fawzi Rida and Nathalie Fouet

With the European Regulation on Artificial Intelligence (AI Act) in place, providers of certain artificial intelligence (AI) systems will have to meet new requirements by 2025 before placing those systems on the market.

This post aims to show how you, as a data scientist, can get ready to meet these new obligations.

Why “Responsible AI”?

Responsible AI ensures that an AI product has been developed and will be used ethically, fairly, and transparently. It also ensures the well-being of individuals and society as a whole.

Data scientists usually use evaluation metrics, such as accuracy for classification and Root Mean Squared Error (RMSE) for regression, to determine how well a machine learning (ML) model is performing. These metrics are important for evaluating a model’s performance, but they are not sufficient on their own. They have two main limitations:

- They do not provide a full picture of how the model will behave in real scenarios.

- They fail to consider the ethical, social, and legal ramifications of using the model.
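To make the first limitation concrete, here is a minimal sketch in plain Python. The data, the `site` grouping and the helper names are hypothetical and only serve the illustration: the overall accuracy looks acceptable, while one small group is always misclassified.

```python
from collections import defaultdict


def accuracy(y_true, y_pred):
    """Share of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def accuracy_by_group(y_true, y_pred, groups):
    """Accuracy computed separately for each distinct value in `groups`."""
    buckets = defaultdict(lambda: ([], []))
    for t, p, g in zip(y_true, y_pred, groups):
        buckets[g][0].append(t)
        buckets[g][1].append(p)
    return {g: accuracy(ts, ps) for g, (ts, ps) in buckets.items()}


# Hypothetical toy data: 8 patients from site "A", 2 from site "B".
y_true = [1, 0, 1, 0, 1, 0, 1, 0, 1, 1]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0, 0, 0]
site = ["A"] * 8 + ["B"] * 2

overall = accuracy(y_true, y_pred)
per_site = accuracy_by_group(y_true, y_pred, site)

print(overall)   # 0.8
print(per_site)  # {'A': 1.0, 'B': 0.0}
```

A single score of 0.8 hides the fact that every patient from site "B" is misclassified, which is exactly the kind of blind spot responsible AI tooling tries to surface.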

Let’s imagine a scenario in the medical field. Traditional evaluation methods might find a model highly accurate in detecting tumors. However, responsible AI would also examine whether the model is free of biases that could adversely affect certain groups, and would explain the reasoning behind its decisions.

Important note: Responsible AI won’t replace traditional ML model evaluation methods; instead, it will complement them.

In a previous post about these popular tools, we talked about the Responsible AI Dashboard available in Azure Machine Learning (read the post on the AI Project Run: Managing the Life Cycle of an ML Model).

“Responsible” Artificial Intelligence by Microsoft

How Can Responsible AI Products Be Created?

Two years ago, Microsoft presented its vision for responsible AI in the form of a list of principles:

An overview of Microsoft’s “responsible AI” principles

To be responsible, an AI product must be:

Fair: without bias or discrimination, treating all users fairly.

Our advice:

- Use many different and representative datasets to train ML models.

- Test and monitor the system regularly to detect any bias. The Fairlearn package is designed for this and can easily be added to Azure Machine Learning pipelines.
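As an illustration of what such a bias check measures, the sketch below computes the selection rate per group and the gap between groups (often called the demographic parity difference). It is written in plain Python on hypothetical data and does not use Fairlearn’s API; Fairlearn provides group metrics of this kind out of the box.

```python
def selection_rates(y_pred, groups):
    """Share of positive predictions (1) within each group."""
    totals, positives = {}, {}
    for p, g in zip(y_pred, groups):
        totals[g] = totals.get(g, 0) + 1
        positives[g] = positives.get(g, 0) + (1 if p == 1 else 0)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(y_pred, groups):
    """Gap between the highest and the lowest selection rate across groups."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical predictions for eight applicants and a sensitive feature.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
gender = ["F", "F", "F", "F", "M", "M", "M", "M"]

rates = selection_rates(y_pred, gender)
gap = demographic_parity_difference(y_pred, gender)

print(rates)  # {'F': 0.75, 'M': 0.25}
print(gap)    # 0.5
```

A gap of 0 would mean both groups receive positive predictions at the same rate. What counts as an acceptable gap depends on the use case and is a decision for the project team, not for the metric.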

Explainable: able to explain its decisions.

Our advice:

- Where possible, use easily interpreted models, such as decision trees.

- Provide transparency tools that explain the model’s predictions. There are several packages available, including SHAP, InterpretML, etc.

Safe: designed to be reliable and minimize the risk of error.

Our advice: Use rigorous testing methods like simulated and real-world tests to make sure the system works well under different conditions. The Azure Machine Learning Service includes responsible AI dashboards that contain counterfactual and causal analysis sections for what-if simulations.

Private: protects individuals’ privacy and does not violate their rights or expectations.

Our advice:

- Use secure methods of sending and storing data.

- Be transparent about how data is used and keep that use under control.

Transparent: transparent in its capabilities and limitations.

Our advice:

- Provide clear and accurate information about system performance and reliability.

- Be open and transparent about any issues. Responsible AI dashboards can be turned into PDF reports called Responsible AI Scorecards in the Azure Machine Learning Service. This is a sort of identity card for the model that meets a certain standard and can be understood by technical (data scientists, etc.) and non-technical profiles (project managers, decision-makers, the legal department, etc.).

Responsible: developed and used in an ethical, fair, and transparent way that promotes people’s well-being.

Our advice: involve all stakeholders in the development process and set clear rules and strategies to make sure that the system is used responsibly.

After presenting its Responsible AI vision, Microsoft launched an open-source project called the Responsible AI Toolbox in December 2021. This led to the development of a component in Azure Machine Learning: the Responsible AI Dashboard.

The Responsible AI Dashboard in Azure Machine Learning

The Responsible AI Dashboard provides a single interface where best practices for Responsible AI can be implemented. This dashboard is interactive and adapts to our choices in real time (we can connect it to compute instances and change the features and metrics of focus on the fly to suit our needs).

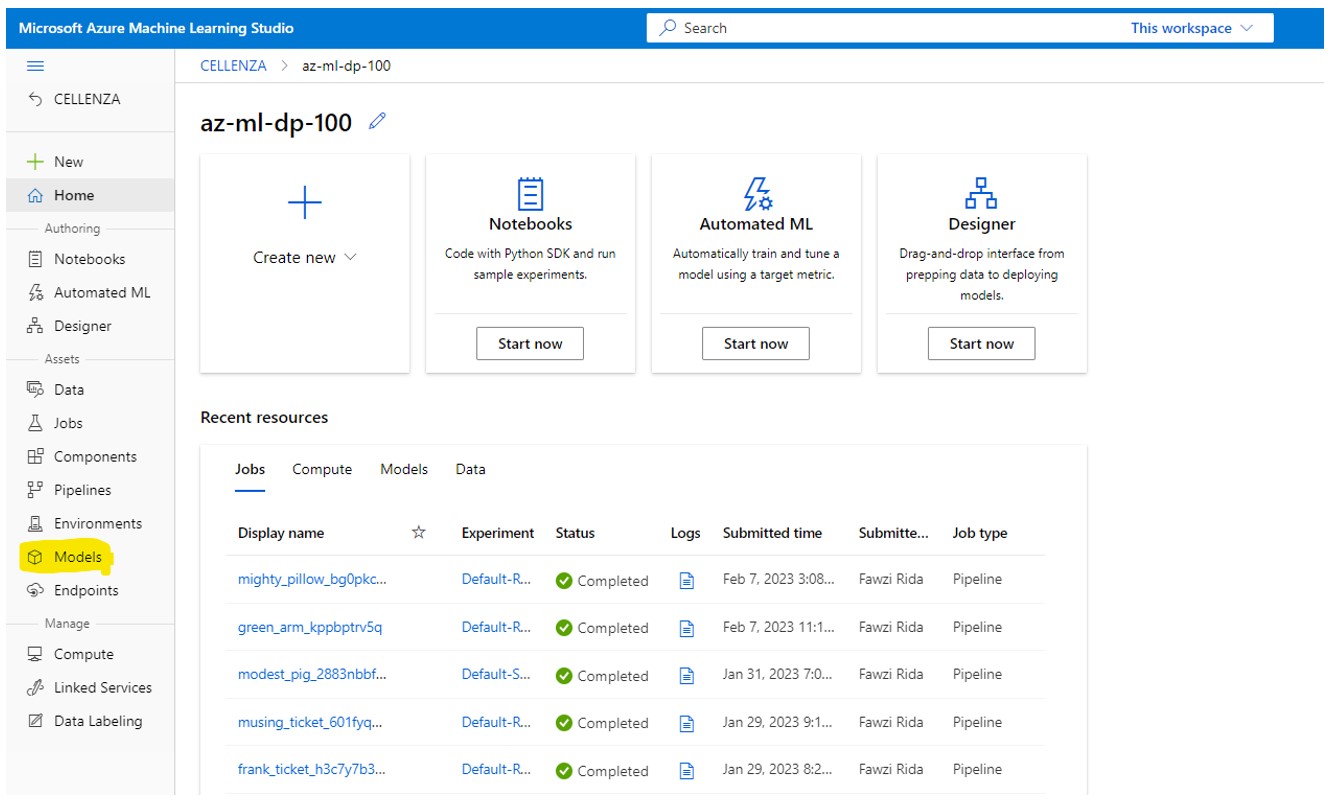

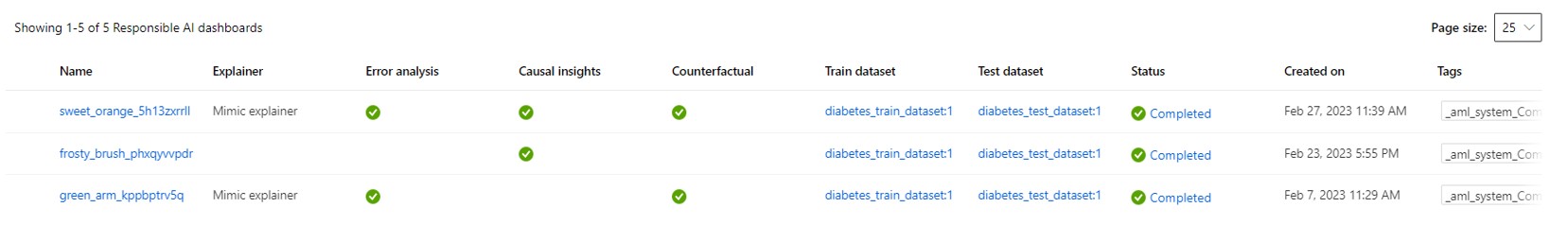

It’s easy to set up this Responsible AI Dashboard for your Azure ML models:

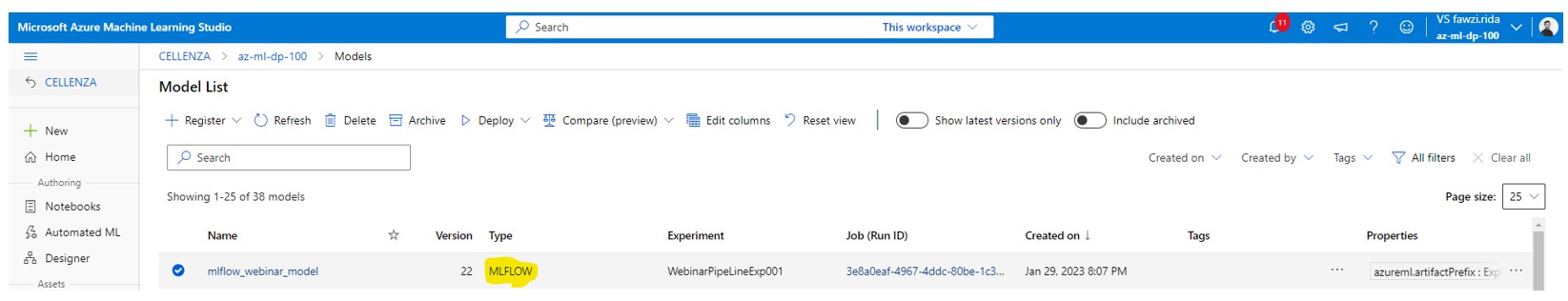

- Start by saving your models in the MLflow format under “Models” in Azure Machine Learning Studio:

- Next, choose the model you want to attach a responsible AI dashboard to:

- Go to Responsible AI and click Create Responsible AI Insights:

- Follow the creation instructions:

- Select the Train and Test datasets and choose the Modeling task:

- The Responsible AI Dashboard has two visualization types:

  - Model debugging visualizations: they help you understand and debug an ML model.

  - Real-life intervention visualizations: for rigorous testing in what-if scenarios.

- Choose and confirm your choice of visualizations. A job will be created for the dashboard construction phase:

Your Responsible AI Dashboard is ready!

There are six main sections to this Responsible AI Dashboard:

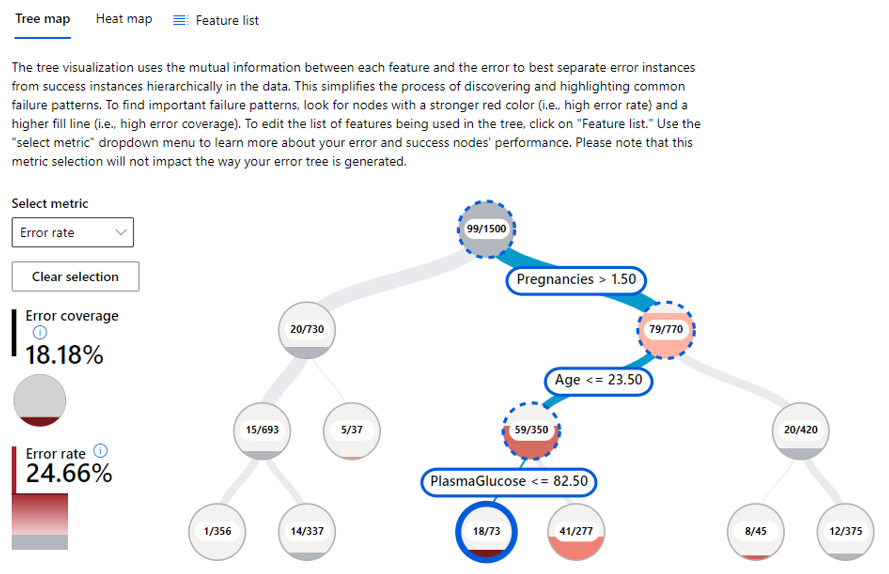

- Section I: Error Analysis

This gives you a better understanding of how errors are distributed in the model and helps you identify the data cohorts where the model makes the most errors.

Illustration Tree Map: Identifies cohorts of data with a higher error rate

Illustration Heat Map: slices the data in a 1D or 2D grid by input features
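The idea behind the heat map can be sketched in a few lines of plain Python: group the test rows by two input features and compute the error rate in each cell. The rows, feature names and helper below are hypothetical, and this is not how the dashboard itself is implemented.

```python
from collections import defaultdict


def error_rate_grid(rows, feature_a, feature_b):
    """Error rate for every (feature_a, feature_b) cell of the grid."""
    counts = defaultdict(lambda: [0, 0])  # cell -> [errors, total]
    for row in rows:
        cell = (row[feature_a], row[feature_b])
        counts[cell][0] += int(row["y_true"] != row["y_pred"])
        counts[cell][1] += 1
    return {cell: errors / total for cell, (errors, total) in counts.items()}


# Hypothetical test rows with true labels and model predictions.
rows = [
    {"age_band": "<40", "sex": "F", "y_true": 1, "y_pred": 1},
    {"age_band": "<40", "sex": "F", "y_true": 0, "y_pred": 0},
    {"age_band": "<40", "sex": "M", "y_true": 1, "y_pred": 1},
    {"age_band": "<40", "sex": "M", "y_true": 0, "y_pred": 1},
    {"age_band": "40+", "sex": "F", "y_true": 1, "y_pred": 0},
    {"age_band": "40+", "sex": "F", "y_true": 1, "y_pred": 0},
    {"age_band": "40+", "sex": "M", "y_true": 0, "y_pred": 0},
    {"age_band": "40+", "sex": "M", "y_true": 1, "y_pred": 1},
]

grid = error_rate_grid(rows, "age_band", "sex")
worst_cell = max(grid, key=grid.get)

print(worst_cell)        # ('40+', 'F')
print(grid[worst_cell])  # 1.0
```

Passing the same feature name twice reduces this to a 1D slice, which mirrors the 1D or 2D choice offered by the heat map.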

- Section II: Model Overview

Provides an overview of the ML model evaluation metrics and the bias calculation metrics.

Illustration of ML evaluation metrics

- Section III: Data Analysis

Enables statistical exploration of the data and helps identify problems of overrepresentation or underrepresentation in the data.

Illustration of the statistical distribution of data
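A quick way to check representation yourself is to compute the share of each group in the dataset and flag the groups that fall below a threshold. The sketch below uses hypothetical data, and the 20% threshold is an arbitrary assumption chosen for the example.

```python
from collections import Counter


def group_shares(values):
    """Share of the dataset taken by each distinct value."""
    counts = Counter(values)
    total = len(values)
    return {value: count / total for value, count in counts.items()}


def underrepresented(values, min_share):
    """Sorted list of values whose share is strictly below `min_share`."""
    return sorted(v for v, s in group_shares(values).items() if s < min_share)


# Hypothetical "region" column of a ten-row training set.
region = ["north"] * 6 + ["south"] * 3 + ["east"] * 1

shares = group_shares(region)
flagged = underrepresented(region, min_share=0.2)

print(shares)   # {'north': 0.6, 'south': 0.3, 'east': 0.1}
print(flagged)  # ['east']
```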

- Section IV: Feature Importance

Enables diagnostics on ML model predictions so they can be interpreted better.

Illustration of the main features of the ML model
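One common way to estimate feature importance is permutation importance: permute the values of one feature and measure how much the model’s score drops. The sketch below is an illustration only, not necessarily the method the dashboard uses. The model and data are hypothetical, and a fixed cyclic shift replaces the usual random shuffle so that the result is reproducible.

```python
def model(row):
    """Hypothetical stand-in model: uses income only, ignores shoe_size."""
    return 1 if row["income"] > 50 else 0


def accuracy(rows, labels):
    """Share of rows for which the model predicts the true label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(rows, labels, feature):
    """Accuracy drop when `feature` is shifted by one row (a fixed permutation)."""
    column = [r[feature] for r in rows]
    shifted = column[1:] + column[:1]
    permuted = [{**r, feature: v} for r, v in zip(rows, shifted)]
    return accuracy(rows, labels) - accuracy(permuted, labels)


rows = [
    {"income": 20, "shoe_size": 38},
    {"income": 80, "shoe_size": 44},
    {"income": 30, "shoe_size": 41},
    {"income": 90, "shoe_size": 39},
]
labels = [0, 1, 0, 1]

importance = {f: permutation_importance(rows, labels, f) for f in ("income", "shoe_size")}
print(importance)  # {'income': 1.0, 'shoe_size': 0.0}
```

Breaking the link between `income` and the label destroys the model’s accuracy, while permuting `shoe_size` changes nothing because the model never reads it.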

- Section V: Counterfactuals

Allows you to see what the model would predict if you changed the input data. This helps you understand the ML model better and makes debugging easier.

Illustration of counterfactuals

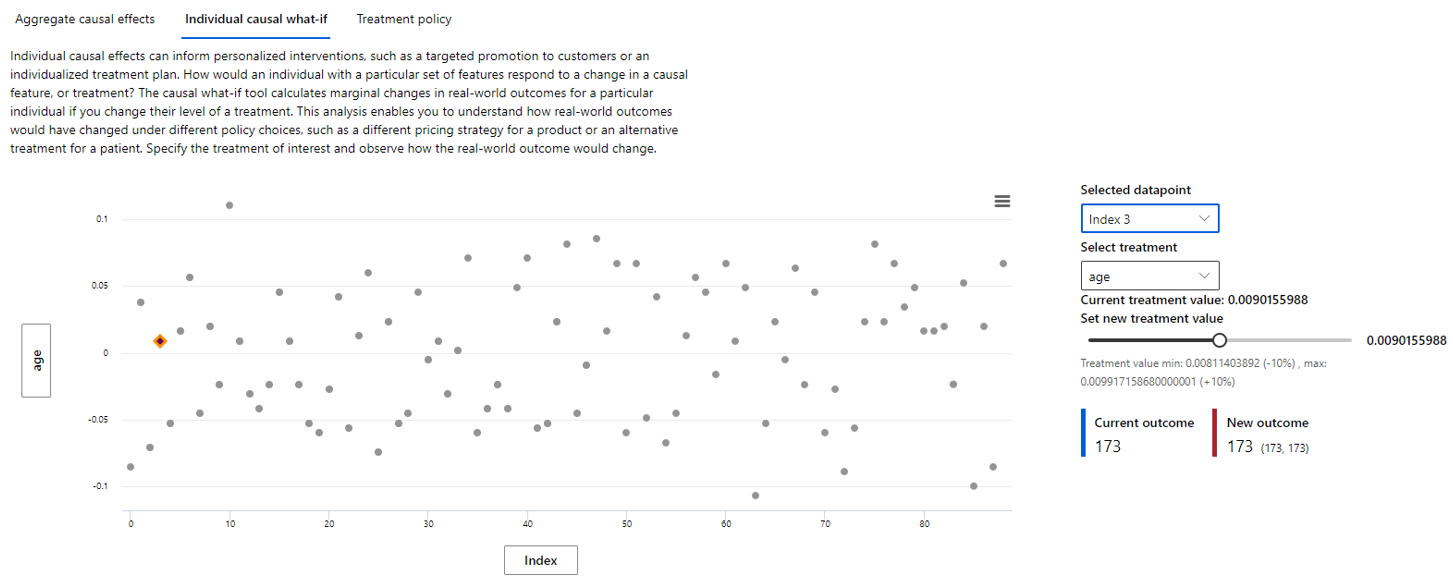
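To give an intuition of what a counterfactual is, the sketch below searches for the smallest change to a single feature that flips the prediction of a toy model. The scoring rule, the applicant and the helper are hypothetical; real counterfactual generators search over several features at once and apply feasibility constraints.

```python
def model(row):
    """Hypothetical stand-in model: approves (1) when the score reaches 50."""
    return 1 if row["income"] - 2 * row["debt"] >= 50 else 0


def find_counterfactual(row, feature, candidates):
    """Return a copy of `row` with the first candidate value that flips the prediction."""
    original = model(row)
    for value in candidates:
        changed = {**row, feature: value}
        if model(changed) != original:
            return changed
    return None  # no candidate value changes the prediction


applicant = {"income": 40, "debt": 5}

# Candidates are ordered from closest to farthest from the current value,
# so the first hit is the smallest change that flips the outcome.
by_income = find_counterfactual(applicant, "income", range(41, 101))
by_debt = find_counterfactual(applicant, "debt", range(4, -1, -1))

print(model(applicant))  # 0
print(by_income)         # {'income': 60, 'debt': 5}
print(by_debt)           # None
```

Here the answer reads as "the application would have been approved with an income of 60", whereas reducing the debt alone, even to zero, would not have been enough.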

- Section VI: Causal Analysis

Estimates the impact of a feature on the outcome and helps you define treatment strategies.

- Section VI.1: Aggregate causal effects: Shows the features that have a significant impact on our outputs

Aggregate causal effects illustration
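Why a causal estimate rather than a simple comparison of averages? The sketch below contrasts a naive difference in means with an estimate adjusted for one observed confounder by stratification. This is a textbook adjustment on hypothetical data, not the estimator used by the dashboard, and it assumes that every stratum contains both treated and untreated rows.

```python
from collections import defaultdict


def mean(values):
    return sum(values) / len(values)


def naive_effect(rows):
    """Difference in mean outcome between treated and untreated rows."""
    treated = [r["outcome"] for r in rows if r["treated"]]
    control = [r["outcome"] for r in rows if not r["treated"]]
    return mean(treated) - mean(control)


def stratified_effect(rows, confounder):
    """Average of per-stratum effects, weighted by stratum size."""
    strata = defaultdict(list)
    for r in rows:
        strata[r[confounder]].append(r)
    return sum(len(g) / len(rows) * naive_effect(g) for g in strata.values())


# Hypothetical data: loyal customers spend more AND get the discount more often.
rows = (
    [{"segment": "loyal", "treated": True, "outcome": 12}] * 3
    + [{"segment": "loyal", "treated": False, "outcome": 10}]
    + [{"segment": "new", "treated": True, "outcome": 4}]
    + [{"segment": "new", "treated": False, "outcome": 2}] * 3
)

naive = naive_effect(rows)
adjusted = stratified_effect(rows, "segment")

print(naive)     # 6.0
print(adjusted)  # 2.0
```

The naive comparison attributes to the discount a difference that mostly comes from the customer segment. Once the segment is held fixed, the estimated effect drops from 6 to 2.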

- Section VI.2: Individual causal what-if (visualization in a regression model case): Shows the change to a data point’s feature and how it affects the output (current vs. new)

Individual causal what-if illustration

- Section VI.3: Treatment policy: Shows the treatment strategy to be applied to a so-called sensitive feature (one with a significant impact on our outputs)

Treatment policy illustration

There you have the various visualizations available in the dashboard.

The icing on the cake is that you can turn this dashboard into a PDF report (a sort of model identity card) that can be shared easily between teams, with your project manager, or with your legal department! To create a scorecard, select an existing dashboard and click Generate new PDF scorecard.

Below are some of the visualizations shown on the scorecard:

For more information about this dashboard or the scorecard document, have a look at the Microsoft documentation.

Do you need help with your responsible AI projects? Contact us!